Разделы презентаций

- Разное

- Английский язык

- Астрономия

- Алгебра

- Биология

- География

- Геометрия

- Детские презентации

- Информатика

- История

- Литература

- Математика

- Медицина

- Менеджмент

- Музыка

- МХК

- Немецкий язык

- ОБЖ

- Обществознание

- Окружающий мир

- Педагогика

- Русский язык

- Технология

- Физика

- Философия

- Химия

- Шаблоны, картинки для презентаций

- Экология

- Экономика

- Юриспруденция

Data analysis. Data management

Содержание

- 1. Data analysis. Data management

- 2. Data analysisis a process of inspecting, cleansing,

- 3. Data mining is a particular data analysis technique

- 4. Stage 1: Exploration.This stage usually starts with

- 5. Stage 2: Model building and validation. This stage

- 6. Stage 3: Deployment. That final stage involves using

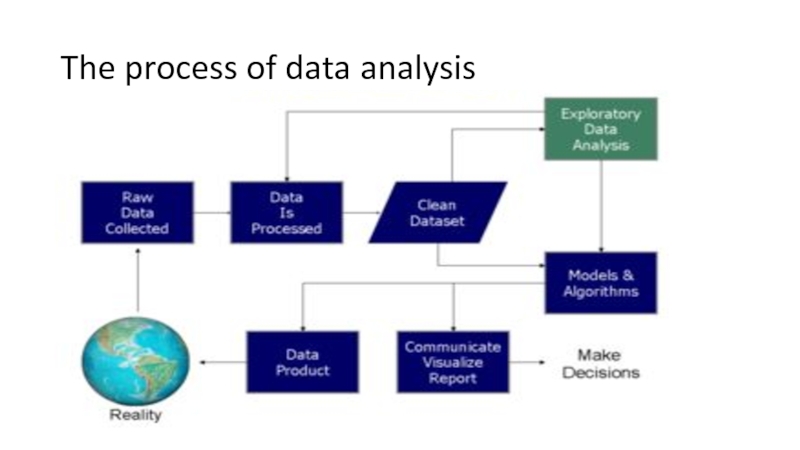

- 7. The process of data analysis

- 8. Data requirementsThe data are necessary as inputs

- 9. Data collectionData are collected from a variety

- 10. Data processingData initially obtained must be processed

- 11. Data cleaningOnce processed and organised, the data

- 12. Exploratory data analysisOnce the data are cleaned,

- 13. Modeling and algorithmsMathematical formulas or models called algorithms may

- 14. Data productA data product is a computer

- 15. CommunicationOnce the data are analyzed, it may

- 16. Free software for data analysisNotable free software

- 17. Скачать презентанцию

Data analysisis a process of inspecting, cleansing, transforming and modeling data with the goal of discovering useful information, informing conclusion and supporting decision-making.

Слайды и текст этой презентации

Слайд 3Data mining

is a particular data analysis technique that focuses on

statistical modeling and knowledge discovery for predictive rather than purely

descriptive purposes, while business intelligence covers data analysis that relies heavily on aggregation, focusing mainly on business information.Слайд 4Stage 1: Exploration.

This stage usually starts with data preparation which

may involve cleaning data, data transformations, selecting subsets of records

and - in case of data sets with large numbers of variables ("fields") - performing some preliminary feature selection operations to bring the number of variables to a manageable range (depending on the statistical methods which are being considered).Слайд 5Stage 2: Model building and validation.

This stage involves considering various

models and choosing the best one based on their predictive

performance (i.e., explaining the variability in question and producing stable results across samples).Слайд 6Stage 3: Deployment.

That final stage involves using the model selected

as best in the previous stage and applying it to

new data in order to generate predictions or estimates of the expected outcome.Слайд 8Data requirements

The data are necessary as inputs to the analysis,

which is specified based upon the requirements of those directing

the analysis or customers (who will use the finished product of the analysis). The general type of entity upon which the data will be collected is referred to as an experimental unit (e.g., a person or population of people). Specific variables regarding a population (e.g., age and income) may be specified and obtained. Data may be numerical or categorical (i.e., a text label for numbers).Слайд 9Data collection

Data are collected from a variety of sources. The

requirements may be communicated by analysts to custodians of the

data, such as information technology personnel within an organization. The data may also be collected from sensors in the environment, such as traffic cameras, satellites, recording devices, etc. It may also be obtained through interviews, downloads from online sources, or reading documentationСлайд 10Data processing

Data initially obtained must be processed or organized for

analysis. For instance, these may involve placing data into rows

and columns in a table format (i.e., structured data) for further analysis, such as within a spreadsheet or statistical software.Слайд 11Data cleaning

Once processed and organised, the data may be incomplete,

contain duplicates, or contain errors. The need for data cleaning

will arise from problems in the way that data are entered and stored. Data cleaning is the process of preventing and correcting these errors. Common tasks include record matching, identifying inaccuracy of data, overall quality of existing data, deduplication, and column segmentation.Слайд 12Exploratory data analysis

Once the data are cleaned, it can be

analyzed. Analysts may apply a variety of techniques referred to

as exploratory data analysis to begin understanding the messages contained in the data. The process of exploration may result in additional data cleaning or additional requests for data, so these activities may be iterative in nature. Descriptive statistics, such as the average or median, may be generated to help understand the data. Data visualization may also be used to examine the data in graphical format, to obtain additional insight regarding the messages within the data.Слайд 13Modeling and algorithms

Mathematical formulas or models called algorithms may be applied to

the data to identify relationships among the variables, such as correlation or causation.

In general terms, models may be developed to evaluate a particular variable in the data based on other variable(s) in the data, with some residual error depending on model accuracy (i.e., Data = Model + Error).Слайд 14Data product

A data product is a computer application that takes

data inputs and generates outputs, feeding them back into the

environment. It may be based on a model or algorithm. An example is an application that analyzes data about customer purchasing history and recommends other purchases the customer might enjoy.Слайд 15Communication

Once the data are analyzed, it may be reported in

many formats to the users of the analysis to support

their requirements. The users may have feedback, which results in additional analysis. As such, much of the analytical cycle is iterative.Слайд 16Free software for data analysis

Notable free software for data analysis

include:

DevInfo – a database system endorsed by the United Nations Development Group for

monitoring and analyzing human development.ELKI – data mining framework in Java with data mining oriented visualization functions.

KNIME – the Konstanz Information Miner, a user friendly and comprehensive data analytics framework.

Orange – A visual programming tool featuring interactive data visualization and methods for statistical data analysis, data mining, and machine learning.

Pandas – Python library for data analysis

PAW – FORTRAN/C data analysis framework developed at CERN

R – a programming language and software environment for statistical computing and graphics.

ROOT – C++ data analysis framework developed at CERN

SciPy – Python library for data analysis